Fasten your seat belt, we are departing…

1. Create a pipeline job.

2. Set up your SCM and build from a Jenkinsfile (sample provided below).

////////////////////
//Sample Jenkinsfile
////////////////////

def AGENT_LABEL = "slave-${UUID.randomUUID().toString()}"
def K8S_DEPLOYMENT_FILE = "k8s-service.yml"

// This is where the dynamic slave magic happens:
// the agent is launched as a k8s pod under a randomly generated label
pipeline {
    agent {
        kubernetes {
            label "${AGENT_LABEL}"
            defaultContainer 'jenkins-jnlp-slave-docker'
            yamlFile 'k8s-jnlp-slave.yml'
        }
    }
    stages {
        stage('Build artifacts') {
            steps {
                // maven3 needs to be pre-configured in Jenkins
                // mavenSettingsConfig is optional; use it when you have a customized settings.xml managed by the Config File Management plugin
                withMaven(maven: 'maven3', mavenSettingsConfig: 'maven-settings') {
                    sh "mvn clean install -Dmaven.test.skip=true"
                }
            }
        }
        stage('Build Docker Image') {
            steps {
                script {
                    withEnv(["WORKSPACE=${pwd()}"]) {
                        // docker-credential is the credential set in Jenkins to authenticate against your docker registry
                        docker.withRegistry("YOUR_NEXUS_REGISTRY", 'docker-credential') {
                            // -f points docker build at the Dockerfile; the trailing "." is the build context
                            def image = docker.build("YOUR_IMAGE_NAME", "-f PROJECT_DIR/Dockerfile .")
                            image.push()
                        }
                    }
                }
            }
        }
        stage('Run kubernetes deployment') {
            steps {
                // kubeconfig is your kube config file, which needs to be pre-configured in Jenkins as a secret file
                // check step 5 below
                withKubeConfig([credentialsId: 'kubeconfig']) {
                    sh "kubectl create -f ${K8S_DEPLOYMENT_FILE}"
                }
            }
        }
    }
}

##########################
#Sample k8s-jnlp-slave.yml
##########################

apiVersion: v1
kind: Pod
metadata:
  labels:
    name: jenkins-jnlp-slave-docker
spec:
  containers:
  - name: jenkins-jnlp-slave-docker
    image: d1ck50n/jenkins-jnlp-slave-docker:latest
    command: ['cat']
    tty: true
    imagePullPolicy: Always
    volumeMounts:
    - name: dockersock
      mountPath: "/var/run/docker.sock"
  imagePullSecrets:
  - name: tntcred
  volumes:
  - name: dockersock
    hostPath:
      path: /var/run/docker.sock

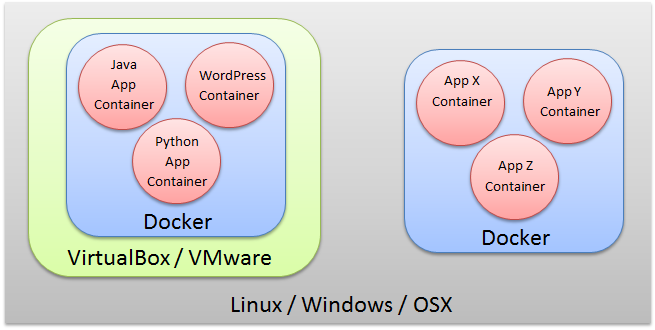

The Jenkins slave runs as a Docker container, and we will use it to build our Docker images, which means the slave container needs a Docker engine available inside it (a.k.a. docker in docker). I could not use a ready-made image from Docker Hub as my Jenkins slave, because the slave also needs the JDK, Maven, kubectl and so on, so I created my own based on the jenkins/jnlp-slave image.
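
If you build your own slave image the same way, a quick sanity check is to confirm that every tool the pipeline calls is actually on the PATH inside the container. The helper below is only a sketch: the function names `missing_tools` and `has_all_tools` are made up for this example, and the list of tools to check is whatever you pass in.

```shell
#!/bin/sh
# Print every tool from the argument list that is NOT found on PATH.
# Nothing is hard-coded: the caller decides which tools matter.
missing_tools() {
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            printf '%s\n' "$tool"
        fi
    done
}

# Succeed only when every listed tool is available.
has_all_tools() {
    [ -z "$(missing_tools "$@")" ]
}
```

Run inside the slave container, `missing_tools java mvn kubectl docker` prints nothing when the image is complete and lists the missing binaries otherwise.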

To achieve DoD, we mount docker.sock from the host into our container (the ‘dockersock’ volume in k8s-jnlp-slave.yml), so the docker client inside the slave talks to the Docker engine running on the host.
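
To see whether the mount really made it into the pod, you can test for the socket file before the pipeline tries to build anything. This is a minimal sketch; `is_docker_socket_mounted` is a name invented for this example, and the default path is simply the `mountPath` from k8s-jnlp-slave.yml.

```shell
#!/bin/sh
# Report whether the given path is a Unix socket.
# Defaults to the mountPath used in k8s-jnlp-slave.yml.
is_docker_socket_mounted() {
    sock="${1:-/var/run/docker.sock}"
    if [ -S "$sock" ]; then
        echo "socket found at $sock"
    else
        echo "no socket at $sock"
        return 1
    fi
}
```

Inside the slave container, `is_docker_socket_mounted && docker version` is a handy follow-up: the server section of that output is reported by the host's engine, reached through the mounted socket.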

3. Install plugins as below:

4. Configure the Jenkins Kubernetes plugin by adding a new entry.

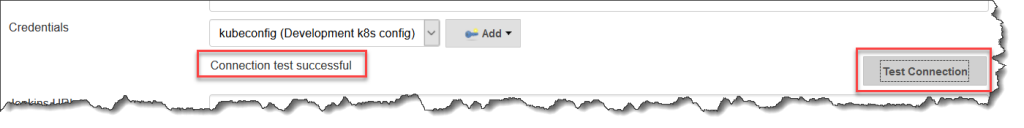

5. Fill in the configuration according to your Kubernetes cluster, or use your kube config file by adding a credential as highlighted below:

6. Regardless of whether you configure it manually or use your customized kube config file, you need to test the connection.

7. Build your job and you should see a new node created and the build start.
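
Steps 6 and 7 can also be cross-checked from a terminal, outside the Jenkins UI. The sketch below wraps two standard kubectl commands; the function names, the `KUBECTL` override variable, and the kubeconfig path you pass in are assumptions made for this example, not something the plugin provides.

```shell
#!/bin/sh
# Allow the kubectl binary to be overridden (assumption: plain "kubectl" is on PATH).
KUBECTL="${KUBECTL:-kubectl}"

# Step 6 from the command line: ask the API server for cluster info
# using the same kube config file you registered in Jenkins.
check_cluster() {
    "$KUBECTL" --kubeconfig "$1" cluster-info
}

# Step 7 from the command line: watch pods in a namespace (default: "default")
# so the dynamic agent pod shows up when the job starts.
watch_agent_pods() {
    "$KUBECTL" --kubeconfig "$1" get pods --namespace "${2:-default}" --watch
}
```

For example, run `check_cluster ~/.kube/config` first, then leave `watch_agent_pods ~/.kube/config` open in a second terminal while you trigger the build.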

Source available @ github.

Credits to authors and websites below:

- https://jpetazzo.github.io/2015/09/03/do-not-use-docker-in-docker-for-ci/

- https://medium.com/@gustavo.guss/jenkins-building-docker-image-and-sending-to-registry-64b84ea45ee9

- https://medium.com/lucjuggery/about-var-run-docker-sock-3bfd276e12fd

- https://medium.com/hootsuite-engineering/building-docker-images-inside-kubernetes-42c6af855f25

- https://estl.tech/accessing-docker-from-a-kubernetes-pod-68996709c04b